[Rebelle-Sante:] In short, what do you think of the criticisms of Gilles-Éric Séralini's study?

[Deheuvels:] I studied Professor Séralini's article in great detail. His toxicological findings come from an experiment on Sprague-Dawley rats. He has been criticized, for example, for using this strain, which is said to be prone to developing tumors. Yet this same strain is used by absolutely everyone, including the companies that want to demonstrate the safety of their processes. So here is one example of a criticism that is absolutely ridiculous.

Professor Bach voiced another criticism of this study in my presence. He told me: "It is not right that Gilles-Éric Séralini used young rats. He should have started his investigation with adult rats." I replied that if the research had started with six-month-old rats, most of them would have died before the end, given their average life expectancy of two years, so a study of this length would have been pointless. Here is another example of a rash, unfounded judgement aimed at destroying this piece of work.

There are several types of statistical analysis. Gilles-Éric Séralini's study is not a certification study seeking to demonstrate that certain substances are harmful, but rather a piece of exploratory research intended to guide future investigations.

At the outset, Séralini did not know what he would find. He therefore designed his experiment by reproducing almost exactly the protocol already used to authorize NK603 maize for the market, while extending its duration and multiplying the number of biomarkers analysed. He obtained a large set of numerical data that enabled him to present a number of findings.

Statistical methods designed to establish a hypothesis (for example, that a product is harmful or, conversely, that it is safe) are called tests. And tests are only a drop in the ocean of statistical methods. Often there is no general method for designing tests on large arrays of complex data (such as functional data, where each observation is a whole curve). This can be done for a small number of numerical observations, but we do not know how to do it when the numerical results are very numerous (as in Séralini's investigation, where more than 50 biomarkers were measured for each animal at several points during the study).
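As a rough illustration of why many measurements complicate testing (this example is not from the interview): if each biomarker were tested separately at the conventional 5% level, the chance of at least one spurious "significant" result grows quickly with the number of tests. The sketch below assumes, unrealistically, that the tests are independent; real biomarkers are correlated, and repeated measurements over time multiply the comparisons further. The function name `familywise_error_rate` is hypothetical.

```python
def familywise_error_rate(alpha: float, m: int) -> float:
    """Probability of at least one false positive among m tests, each run
    at level alpha, assuming (as a simplification) that the tests are
    independent and that no real effect exists."""
    return 1 - (1 - alpha) ** m


if __name__ == "__main__":
    # 50 matches the order of magnitude of biomarkers cited in the interview
    for m in (1, 10, 50):
        print(m, round(familywise_error_rate(0.05, m), 3))
```

Under these assumptions the probability is about 0.40 for 10 tests and about 0.92 for 50.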

In such a context, it is natural to begin by describing the observed phenomena, without trying to demonstrate the existence of particular effects through hypothesis tests. Many statistics articles confine themselves to describing a phenomenon: the statistician says, "I see these effects," without demonstrating that they can be reproduced.

Criticizing Gilles-Éric Séralini because he sometimes says "I see that…" without demonstrating it is a witch hunt. The purpose of exploratory research is to raise legitimate suspicions, which can only be confirmed by certification studies carried out afterwards. It is outrageous to demand proof of these effects from the outset, as if they had to be established once and for all. This is contrary to professional ethics, and it is not how things are usually done.

[Rebelle-Sante:] You recently received the raw data from Gilles-Éric Séralini's study. Do they confirm its validity?

[Deheuvels:] First, I obtained the counts of animals with and without tumors in each group. A basic analysis of these tables shows that the differences between groups are statistically significant.

For example, one cannot conclude that two groups of 10 rats are homogeneous when the first has 2 animals with tumors and the second has, say, 8. It is obvious that such tables show statistically significant differences.
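The interview does not say which test was applied to these counts. As a hedged illustration only, the sketch below applies Fisher's exact test, a standard choice for small 2×2 tables, to the illustrative counts quoted above (2 of 10 rats with tumors in one group, 8 of 10 in the other). These are the speaker's example figures, not the study's data, and the function name `fisher_exact_two_sided` is hypothetical.

```python
from fractions import Fraction
from math import comb


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> Fraction:
    """Two-sided Fisher exact test for the 2x2 table [[a, b], [c, d]].

    Rows are groups; columns are (with tumor, without tumor). With all
    margins fixed, the top-left cell follows a hypergeometric law; the
    p-value sums the probabilities of every table that is no more likely
    than the observed one. Exact integer arithmetic avoids rounding ties.
    """
    row1, row2 = a + b, c + d
    col1 = a + c
    total = comb(row1 + row2, col1)

    def weight(k: int) -> int:
        # Number of ways to place k tumor cases in group 1, given the margins
        return comb(row1, k) * comb(row2, col1 - k)

    observed = weight(a)
    k_min, k_max = max(0, col1 - row2), min(row1, col1)
    extreme = sum(w for w in map(weight, range(k_min, k_max + 1)) if w <= observed)
    return Fraction(extreme, total)


if __name__ == "__main__":
    p = fisher_exact_two_sided(2, 8, 8, 2)  # group 1: 2/10, group 2: 8/10
    print(f"two-sided p = {float(p):.4f}")
```

For these counts the two-sided p-value is 4252/184756, about 0.023, below the conventional 0.05 threshold.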

Only recently did I receive more complete data, which I have undertaken to keep confidential. Nevertheless, I can confirm that there are statistically significant differences in observations other than the counts. If the agencies that may have processed these data did not identify them, it seems to me that this is due to the use of insufficiently sensitive methods. It is easy to see nothing through an optical instrument that one has not bothered to focus.

[Rebelle-Sante:] What do you think about the Academy's probity?

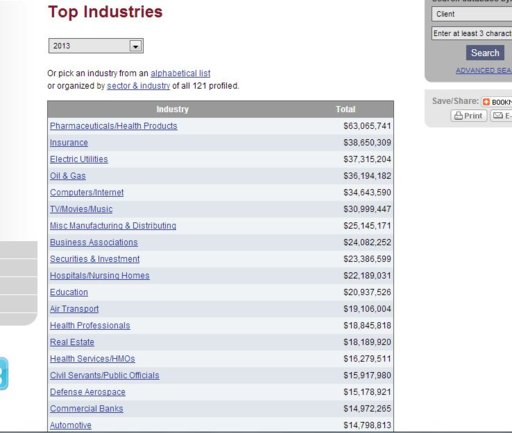

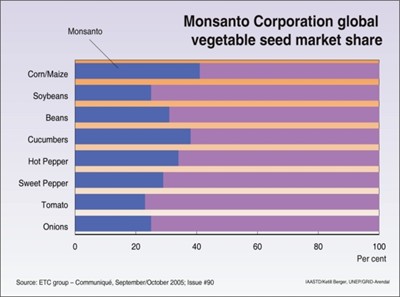

[Deheuvels:] This affair shows the pressure being applied to manipulate the Academy and turn it into a lobbying tool. For me, the Academy must remain a forum where divergent views can be freely expressed and coexist. Seeking consensus on everything is not scientific. The truth cannot be decided by a vote, and trying to manipulate it by dishonest means is unhealthy. Financial interests associated with certain issues are always likely to distort the debate.

It is no longer science that speaks, but the wallet! Everyone should be required to state clearly how their research is funded before speaking for or against a given study. In some cases, conflicts of interest are so obvious that they leave you speechless!

Note:

(1) The study was supported by the Committee for Independent Research and Information on Genetic Engineering (CRIIGEN, www.criigen.org). CRIIGEN has filed several libel suits over scientific criticisms of this study.