Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited

Data Availability: The entire data collection process has been carried out exclusively through the Facebook Graph API which is publicly available.

Funding: Funding for this work was provided by EU FET project MULTIPLEX nr. 317532 and SIMPOL nr. 610704. The funders had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript.

Competing interests: The authors have declared that no competing interests exist.

1]. Relevance of facts, in particular when related to socially relevant issues, mingles with half-truths and untruths to create informational blends [2, 3]. In such a scenario, as pointed out by [4], individuals can be uninformed or misinformed, and corrections are not effective against the diffusion and formation of biased beliefs. In particular, in [5] online debunking campaigns have been shown to create a reinforcement effect in usual consumers of conspiracy stories.

In this work, we address users' consumption patterns of information using two very distinct types of content, i.e., mainstream scientific news and conspiracy news. The former diffuse scientific knowledge and their sources are easy to access. The latter aim at diffusing what is neglected by manipulated mainstream media. Specifically, conspiracy theses tend to reduce the complexity of reality by explaining significant social or political aspects as plots conceived by powerful individuals or organizations. Since these kinds of arguments can sometimes involve the rejection of science, alternative explanations are invoked to replace the scientific evidence. For instance, people who reject the link between HIV and AIDS generally believe that AIDS was created by the U.S. Government to control the African American population [6]. The spread of misinformation in such a context might be particularly difficult to detect and correct because of social reinforcement, i.e., people are more likely to trust information that is in some way consistent with their system of beliefs [7–17].

The growth of knowledge fostered by an interconnected world, together with the unprecedented acceleration of scientific progress, has exposed society to an increasing level of complexity in explaining reality and its phenomena. Indeed, a paradigm shift in the production and consumption of content has occurred, greatly increasing the volume as well as the heterogeneity of information available to users. Everyone on the Web can produce, access and diffuse content, actively participating in the creation, diffusion and reinforcement of different narratives. Such a large heterogeneity of information has fostered the aggregation of people around common interests, worldviews and narratives.

18–20]. They are able to create a climate of disengagement from mainstream society and from officially recommended practices [21], e.g., vaccinations, diet, etc. Despite the enthusiastic rhetoric about collective intelligence [22, 23], the role of socio-technical systems in enforcing informed debates and their effects on public opinion still remain unclear. However, the World Economic Forum listed massive digital misinformation as one of the main risks for modern society [24].

8, 10, 25, 26]. The process of acceptance of a claim (whether documented or not) may be altered by normative social influence or by its coherence with the system of beliefs of the individual [27, 28]. A large body of literature addresses the study of social dynamics on socio-technical systems, from social contagion up to social reinforcement [12–15, 17, 29–41].

42, 43] it has been shown that online unsubstantiated rumors (such as the link between vaccines and autism, global warming induced by chem-trails, or the secret alien government) and mainstream information (such as scientific news and updates) reverberate in a comparable way. The pervasiveness of unreliable content might lead users to mix up unsubstantiated stories with their satirical counterparts, e.g., the presence of sildenafil citratum (the active ingredient of Viagra™) [44] in chem-trails or the anti-hypnotic effects of lemons (more than 45,000 shares on Facebook) [45, 46]. In fact, there are very distinct groups, namely trolls, building Facebook pages as a caricatural version of conspiracy news. Their activities range from controversial comments and posting satirical content mimicking conspiracy news sources, to the fabrication of purely fictitious statements, heavily unrealistic and sarcastic. Not rarely, these memes became viral and were used as evidence in online debates by political activists [47].

48–

50]. A

like stands for a positive feedback to the post; a

share expresses the will to increase the visibility of a given information; and

comment is the way in which online collective debates take form around the topic promoted by posts. Comments may contain negative or positive feedbacks with respect to the post. Our analysis starts with an outline of information consumption patterns and the community structure of pages according to their common users. We label polarized users—users which their like activity (positive feedback) is almost (95%) exclusively on the pages of one category—and find similar interaction patterns on the two communities with respect to preferred contents. According to literature on opinion dynamics [

37], in particular the one related to the Bounded confidence model (BCM) [

51]—two individuals are able to influence each other only if the distance between their opinion is below a given distance—users consuming different and opposite information tend to aggregate into isolated clusters (

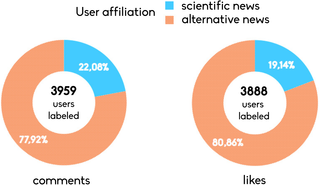

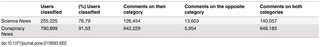

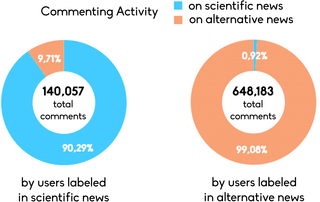

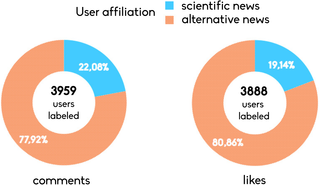

polarization). Moreover, we measure their commenting activity on the opposite category finding that polarized users of conspiracy news are more focused on posts of their community and that they are more oriented on the diffusion of their contents—i.e. they are more prone to like and share posts from conspiracy pages. On the other hand, usual consumers of scientific news result to be less committed in the diffusion and more prone to comment on conspiracy pages. Finally, we test the response of polarized users to the exposure to 4709 satirical and demential version of conspiracy stories finding that, out of 3888 users labeled on likes and 3959 on comments, the most of them are usual consumers of conspiracy stories (80.86% of likes and 77.92% of comments). Our findings, coherently with [

52–

54] indicate that the relationship between beliefs in conspiracy theories and the need for cognitive closure—i.e. the attitude of conspiracists to avoid profound scrutiny of evidence to a given matter of fact—is the driving factors for the diffusion of false claims.

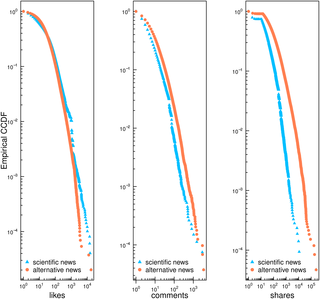

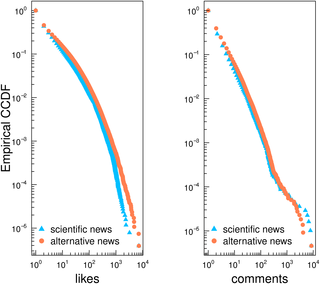

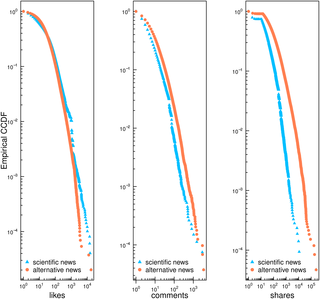

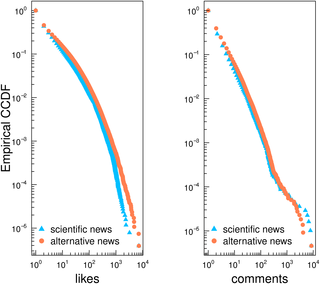

Fig. 1 shows the empirical complementary cumulative distribution function (CCDF) for likes (intended as positive feedback to the post), comments (a measure of the activity of online collective debates), and shares (intended as the will to increase the visibility of a given piece of information) for all posts produced by the different categories of pages. Distributions of likes, comments, and shares in both categories are heavy-tailed.


Fig 1. Users Activity.
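The empirical CCDF used for these distributions can be sketched as follows. This is a minimal illustration, not the authors' code: the function name `empirical_ccdf` is hypothetical, and the convention P(X ≥ x) is an assumption, since the text does not say whether the inequality is strict.

```python
def empirical_ccdf(values):
    """Return [(x, P(X >= x))] for each distinct x in `values`, in ascending order.

    Assumption: the CCDF is taken as P(X >= x); some authors use P(X > x).
    """
    xs = sorted(values)
    n = len(xs)
    points = []
    for i, x in enumerate(xs):
        # At the first occurrence of x, exactly n - i observations are >= x.
        if i == 0 or x != xs[i - 1]:
            points.append((x, (n - i) / n))
    return points


# Hypothetical like counts for four posts.
print(empirical_ccdf([1, 1, 2, 5]))
```

Plotting such points on log-log axes is the usual way to inspect whether a distribution is heavy-tailed.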

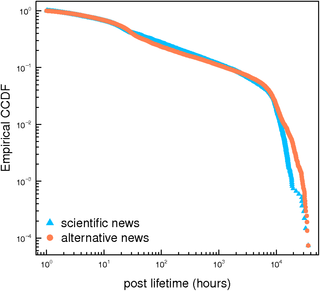

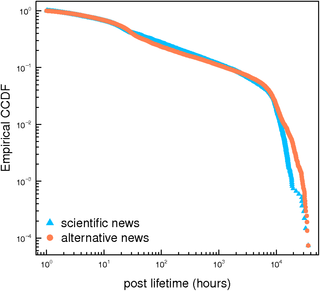

Fig. 2 shows the CCDF of the post lifetime, i.e., the temporal distance between the first and the last comment for each post from the two categories of pages. Very distinct kinds of content have a comparable lifetime.


Fig 2. Post lifetime.
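The lifetime definition above translates directly into code. The sketch below assumes comment timestamps are available as `datetime` objects and reports the lifetime in hours; the function name and the choice of unit are illustrative assumptions, not taken from the paper.

```python
from datetime import datetime


def post_lifetime_hours(comment_times):
    """Temporal distance, in hours, between the first and last comment of a post.

    Assumption: a post with fewer than two comments has lifetime 0.
    """
    if len(comment_times) < 2:
        return 0.0
    span = max(comment_times) - min(comment_times)
    return span.total_seconds() / 3600.0


# Hypothetical timestamps for one post.
times = [
    datetime(2014, 1, 1, 12, 0),
    datetime(2014, 1, 1, 9, 0),
    datetime(2014, 1, 2, 9, 0),
]
print(post_lifetime_hours(times))
```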

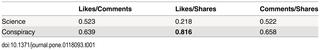

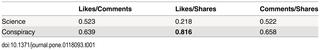

Table 1 shows the Pearson correlation for each pair of user actions on posts (likes, comments and shares). As an example, a high correlation coefficient for Comments/Shares indicates that posts that are commented on more are also likely to be shared more, and vice versa.


Table 1. Users Actions.
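For reference, the Pearson coefficient between two per-post action counts can be computed as in the sketch below. The input lists (for instance comments per post and shares per post) are hypothetical, and the function is a plain textbook implementation rather than the authors' own pipeline.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


# Hypothetical per-post counts: comments and shares for five posts.
comments = [3, 10, 0, 25, 7]
shares = [1, 4, 0, 12, 2]
print(round(pearson(comments, shares), 3))
```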

52–54] which state that conspiracists have a need for cognitive closure, i.e., they are more likely to interact with conspiracy-based theories and have lower trust in other information sources. Qualitatively different information is consumed in a comparable way. However, zooming in on the combination of actions, we find that users of conspiracy pages are more prone to both share and like a post. This latter result indicates a higher level of commitment among consumers of conspiracy news. They are more oriented towards the diffusion of conspiracy-related topics that are, according to their system of beliefs, neglected by mainstream media and scientific news and consequently very difficult to verify. Such a diffusion-oriented pattern raises interesting questions about the pervasiveness of unsubstantiated rumors in online social media.

Information-based communities

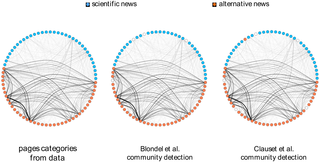

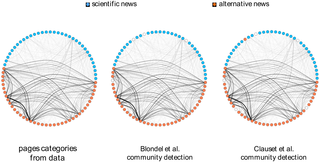

Methods section for further details and the list of pages). We want to understand whether users' engagement across very distinct content shapes different communities around that content. We apply a network-based approach aimed at measuring distinctive connectivity patterns of these information-based communities, i.e., users consuming information belonging to the same narrative. In particular, we transform the data into a bipartite network of pages and users and consider its projection on pages, i.e., two pages are connected if a user liked a post from both of them. In Fig. 3 we show the membership of pages (orange for conspiracy and azure for science). In the first panel, memberships are given according to our categorization of pages (for further details refer to the Methods section). The second panel shows the page network with membership given by applying the multi-level modularity optimization algorithm [55]. In the third panel, membership is obtained by applying an algorithm that looks for the maximum modularity score [56].


Fig 3. Page Network.
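The page network described in this section (two pages linked when the same user liked a post from both) can be built from raw like records along the following lines. This is a sketch under stated assumptions: the `(user, page)` record format and the function name are hypothetical, and weighting each link by the number of common users is an illustrative choice, since the text only states when two pages are connected.

```python
from collections import defaultdict
from itertools import combinations


def project_on_pages(likes):
    """Project a user-page bipartite network onto pages.

    `likes` is an iterable of (user, page) pairs meaning that the user liked
    at least one post of the page. Returns {(page_a, page_b): weight}, where
    weight counts the users the two pages share (an assumed weighting).
    """
    pages_by_user = defaultdict(set)
    for user, page in likes:
        pages_by_user[user].add(page)

    weights = defaultdict(int)
    for pages in pages_by_user.values():
        # Every unordered pair of pages liked by the same user gets a link.
        for a, b in combinations(sorted(pages), 2):
            weights[(a, b)] += 1
    return dict(weights)


# Hypothetical like records.
likes = [
    ("u1", "A"), ("u1", "B"),
    ("u2", "A"), ("u2", "B"), ("u2", "C"),
    ("u3", "C"),
]
print(project_on_pages(likes))
```

A community detection algorithm can then be run on the resulting weighted page graph.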

50]. We consider a user to be polarized in a community when the number of his/her likes with respect to his/her total like activity on one category—scientific or conspiracy news—is higher than 95% (for further details about the algorithm refer to the

Methods section). We identify 255,225 polarized users of scientific pages—i.e., resulting t be the 76,79% of users interacted on scientific pages) and 790,899 conspiracy polarized users—i.e., the 91,53% of users interacting with conspiracy pages in terms of liking. Users activity across pages is highly polarized. According to literature on opinion dynamics [

37] in particular the one related to the Bounded Confidence Model (BCM) [

51]—two nodes are able to influence each other only if the distance between their opinions is below a given distance—users consuming different and opposite information tend to form polarized clusters. The same hold If we look at commenting activity of polarized users inside and outside their community. In particular, those users that are polarized on conspiracy news tend to interact especially in their community both in terms of comments (99,08%) and likes. Users polarized in science tend to comment slightly more outside their community (90,29%). Results are summarized in

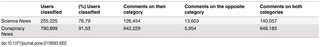

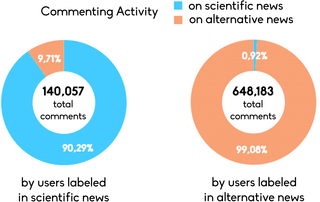

Table 2.


Table 2. Activity of polarized users.
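The 95% rule used to label polarized users can be sketched as a simple thresholding function. The names below are hypothetical, and the strict inequality follows the wording "higher than 95%"; the exact boundary handling in the authors' algorithm is an assumption here.

```python
def label_polarized(likes_science, likes_conspiracy, theta=0.95):
    """Label a user from like counts on the two categories of pages.

    Returns "science" or "conspiracy" when more than `theta` of the user's
    likes fall on that category, and None otherwise (including users with
    no likes). The strict '>' comparison is an assumption.
    """
    total = likes_science + likes_conspiracy
    if total == 0:
        return None
    if likes_science / total > theta:
        return "science"
    if likes_conspiracy / total > theta:
        return "conspiracy"
    return None


# Hypothetical users as (likes on science pages, likes on conspiracy pages).
users = {"u1": (20, 0), "u2": (1, 99), "u3": (50, 50)}
for name, (sci, con) in users.items():
    print(name, label_polarized(sci, con))
```

Re-running the labelling with different values of `theta` gives the number of polarized users as a function of the threshold.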

Fig. 4 shows the CCDF for likes and comments of polarized users. Despite the profoundly different nature of the content, consumption patterns are nearly the same both in terms of likes and comments. This finding indicates that highly engaged users of different and clustered communities, formed around different kinds of narratives, consume their preferred information in a similar way.


Fig 4. Consumption patterns of polarized users.

As shown in Fig. 5, polarized users of scientific news made 13,603 comments on posts published by conspiracy news (9.71% of their total commenting activity), whereas polarized users of conspiracy news commented on scientific posts only 5,954 times (0.92% of their total commenting activity, i.e., a share roughly ten times smaller than that of polarized users of scientific news).


Fig 5. Activity and communities.

57, 58] law proposing to fund policy makers with 134 billion euros (10% of the Italian GDP) in case of defeat in the political competition. This was an intentional joke with an explicit mention of its satirical nature. The case of Senator Cirenga became popular among online political activists and was used as an argument in political debates [47].

Fig. 6 shows how polarized users of both categories interact with troll posts in terms of comments and likes. We find that polarized users of conspiracy pages are more active in liking and commenting on intentionally false claims.


Fig 6. Polarized users on false information.

42, 43] it has been shown that unsubstantiated claims reverberate for a timespan comparable to that of more verified information and that usual consumers of conspiracy theories are more prone to interact with them. Conspiracy theories find on the internet a natural medium for their diffusion and, not rarely, trigger collective counter-conspirational actions [59, 60]. Narratives grounded on conspiracy theories tend to reduce the complexity of reality and are able to contain the uncertainty they generate [18–20]. In this work we studied how users interact with information related to different (opposite) narratives on Facebook. Through a thresholding algorithm we label polarized users on the two categories of pages, identifying well-shaped communities. In particular, we measure the commenting activity of polarized users on the opposite category, finding that polarized users of conspiracy news are more focused on posts of their community and that their attention is more oriented towards diffusing conspiracy content. On the other hand, polarized users of scientific news are less committed to diffusion and more prone to comment on conspiracy pages. A possible explanation for such behavior is that the former want to diffuse what is neglected by mainstream thinking, whereas the latter aim at inhibiting the diffusion of conspiracy news and the proliferation of narratives based on unsubstantiated claims. Finally, we test how polarized users of both categories responded to the inoculation of 4,709 false claims produced by a parody page, finding polarized users of conspiracy pages to be the most active.

52–54] indicating the existence of a relationship between beliefs in conspiracy theories and the need for cognitive closure. Those who use a more heuristic approach when evaluating evidence to form their opinions are more likely to end up with an account more consistent with their existing system of beliefs. However, anti-conspiracy theorists may not only reject evidence that points toward a conspiracy theory account, but also spend cognitive resources seeking out evidence to debunk conspiracy theories even when these are satirical imitations of false claims. These results open new possibilities for understanding the popularity of information in online social media beyond simple structural metrics. Furthermore, we show that where unsubstantiated rumors are pervasive, false rumors might easily proliferate. The next step envisioned for our research is to look at the reactions of users to different kinds of information according to a more detailed classification of content.

61], which is publicly available, and for the analysis (according to the specification settings of the API) we used only publicly available data (users with privacy restrictions are not included in the dataset). The pages from which we downloaded data are public Facebook entities (they can be accessed by anyone). User content contributed to such pages is also public unless the user's privacy settings specify otherwise, in which case it is not available to us.

Data collection

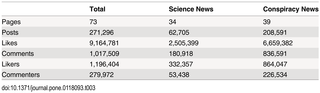

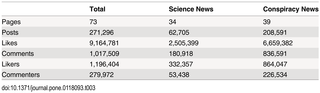

Table 3. The first category includes all pages diffusing conspiracy information, i.e., pages which disseminate controversial information, most often lacking supporting evidence and sometimes contradicting official news (i.e., conspiracy theories). The second category is that of scientific dissemination, including scientific institutions and scientific press whose main mission is to diffuse scientific knowledge.


Table 3. Breakdown of Facebook dataset.

55, 56]. The former algorithm is based on multi-level modularity optimization. Initially, each vertex is assigned to a community of its own. In every step, vertices are re-assigned to communities in a local, greedy way: each node is moved to the community in which it achieves the highest modularity. In contrast, the latter algorithm looks for the maximum modularity score by considering all possible community structures in the network. We apply both algorithms to the bipartite projection on pages.
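Both algorithms score a candidate partition by its modularity. The text does not spell the score out, so the sketch below assumes the standard Newman definition for an undirected weighted graph, Q = Σ_c [L_c / m − (d_c / 2m)²], where m is the total edge weight, L_c the weight of edges inside community c, and d_c the summed degree of its nodes. The function name and the edge-dictionary input format are hypothetical.

```python
from collections import defaultdict


def modularity(edges, community):
    """Newman modularity of a partition of an undirected weighted graph.

    `edges` maps (u, v) to a positive weight, each undirected edge listed
    once and without self-loops; `community` maps each node to a label.
    """
    m = sum(edges.values())
    if m == 0:
        raise ValueError("graph has no edges")
    internal = defaultdict(float)  # L_c: weight of edges inside community c
    degree = defaultdict(float)    # d_c: summed degree of nodes in community c
    for (u, v), w in edges.items():
        degree[community[u]] += w
        degree[community[v]] += w
        if community[u] == community[v]:
            internal[community[u]] += w
    return sum(internal[c] / m - (degree[c] / (2 * m)) ** 2 for c in degree)


# Hypothetical page graph: two triangles joined by a single edge.
edges = {
    ("a", "b"): 1, ("b", "c"): 1, ("a", "c"): 1,
    ("d", "e"): 1, ("e", "f"): 1, ("d", "f"): 1,
    ("c", "d"): 1,
}
split = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}
merged = {node: 0 for node in split}
print(modularity(edges, split), modularity(edges, merged))
```

In this toy graph the two-triangle split scores higher than putting every page in one community, which is the kind of comparison a greedy local move relies on.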

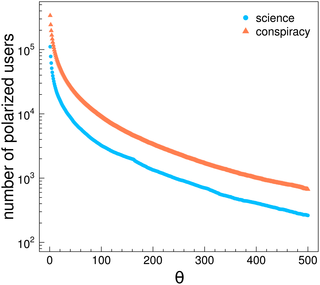

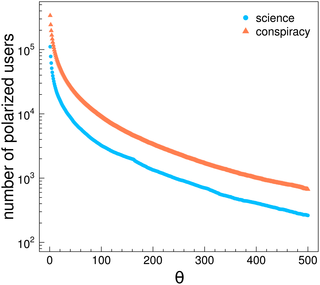

Fig. 7 shows the number of polarized users as a function of θ. Both curves decrease at a comparable rate.


Fig 7. Polarized users and activity.

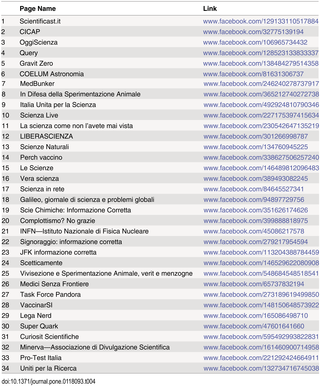

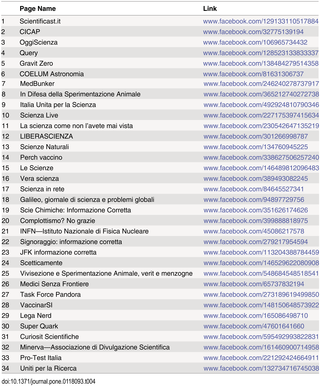

Table 4 lists the scientific news sources and Table 5 lists the conspiracy pages.


Table 4. Scientific news sources.


Table 5. Conspiracy news sources.
