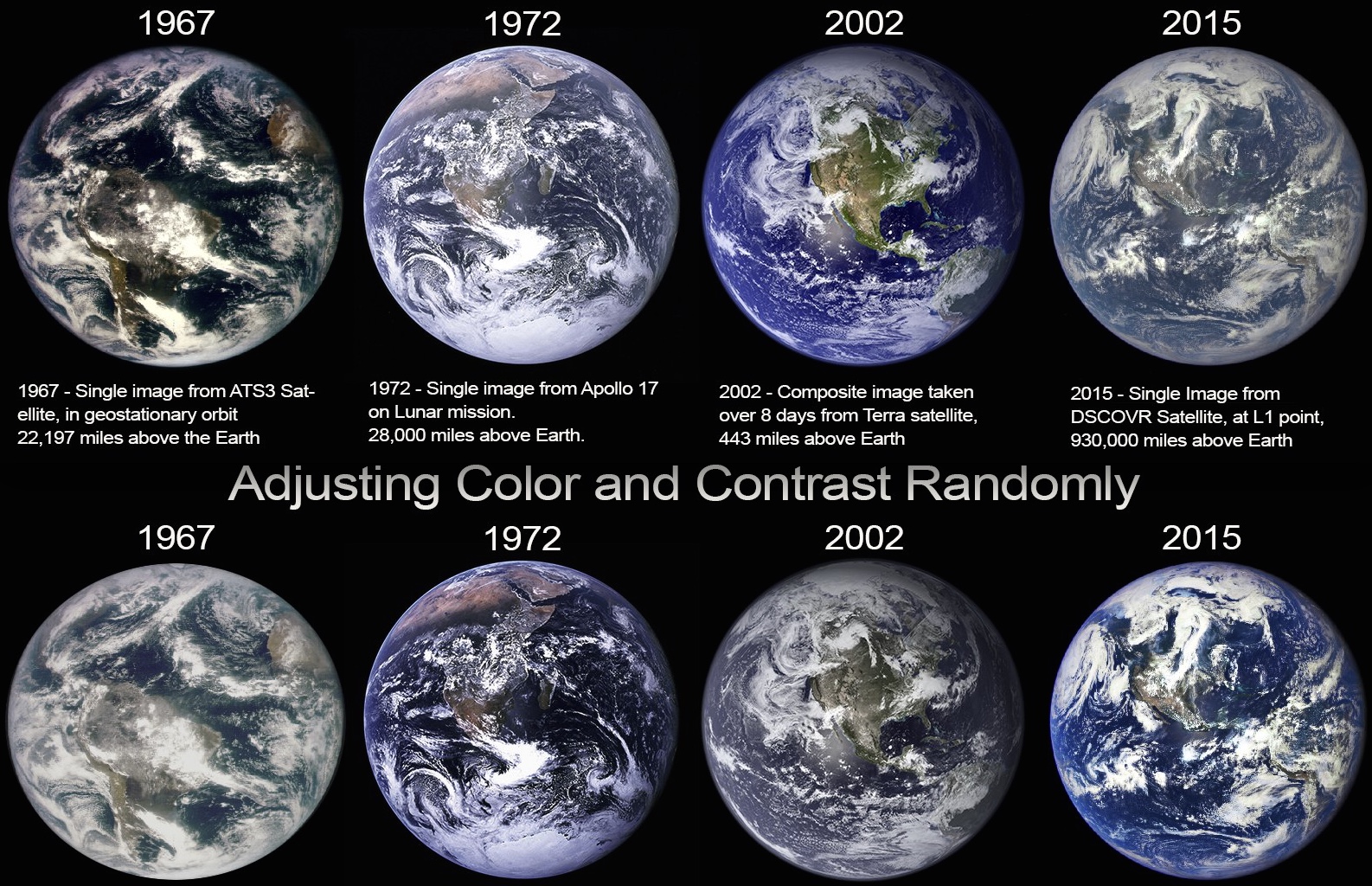

This month NASA released a new photo of the Earth from space (the 2015 image above), taken from the DSCOVR satellite, 930,000 miles above the Earth.

Some people have claimed that this new image shows an increasingly hazy Earth, and that this is evidence of an increase in pollution, or a secret geoengineering program (using "chemtrails"). Some more extreme theorists have suggested that the image is fake because the continents (particularly North America) appear to be a different size to earlier photos.

The misconception comes from a misunderstanding about how the photos are taken. This new 2015 image is noteworthy because it's the first time since 1972 that a good quality single-image photograph has been taken of the Earth. The previous such image (in 1972) was taken by an astronaut on board the Apollo 17 spacecraft during the last manned mission to the Moon. This was the first image called the "Blue Marble". Although similar images had been taken before (such as the 1967 images taken by the ATS-3 satellite), the 1972 Blue Marble image became iconic, and it remains the last such image taken by an actual person.

But notice the 1972 image itself is rather hazy, especially when compared to the 2002 image, and the 1967 image seems less hazy too. Did the air alternate between clear and hazy in 1967, 1972, 2002, and 2015?

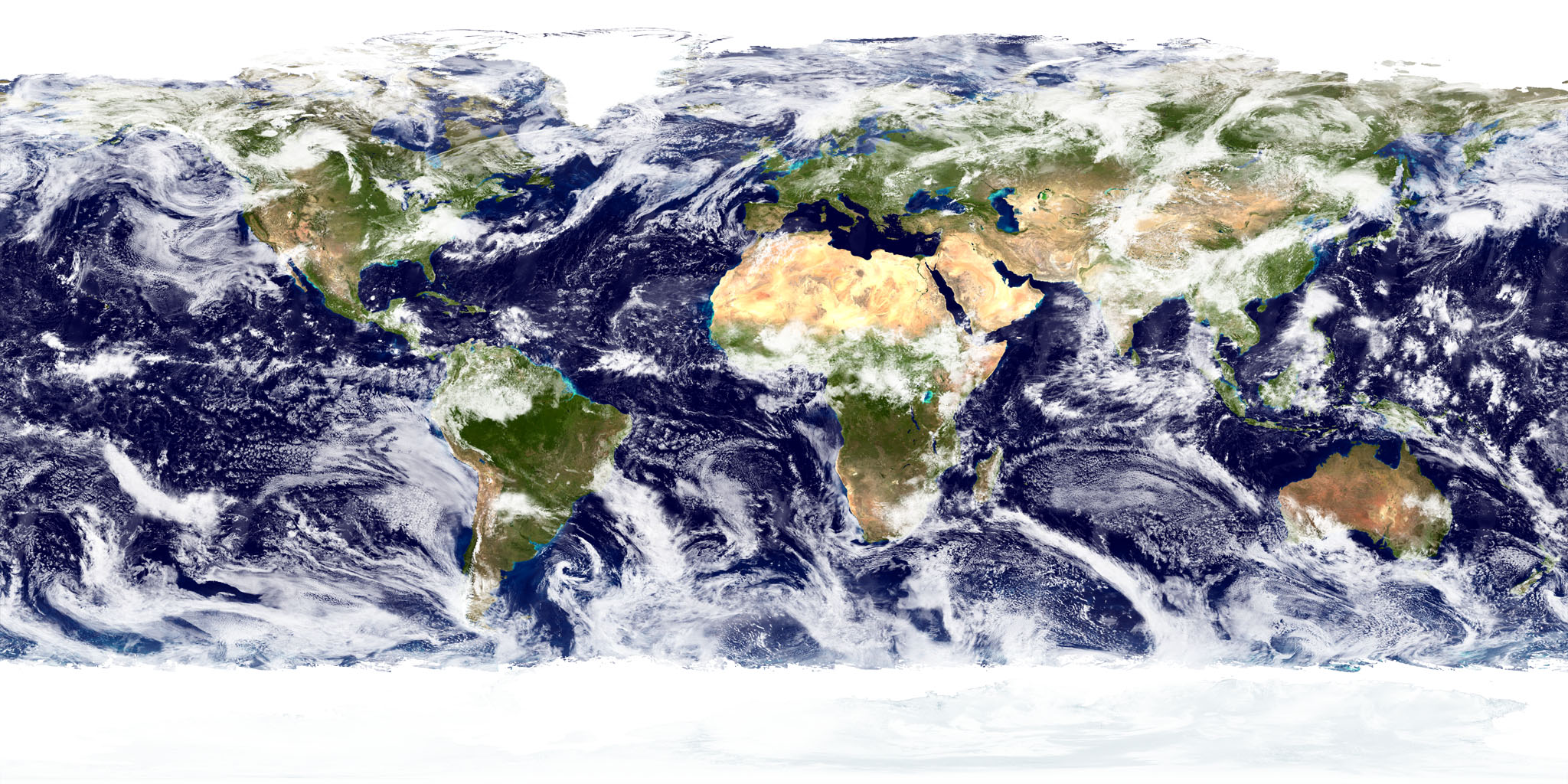

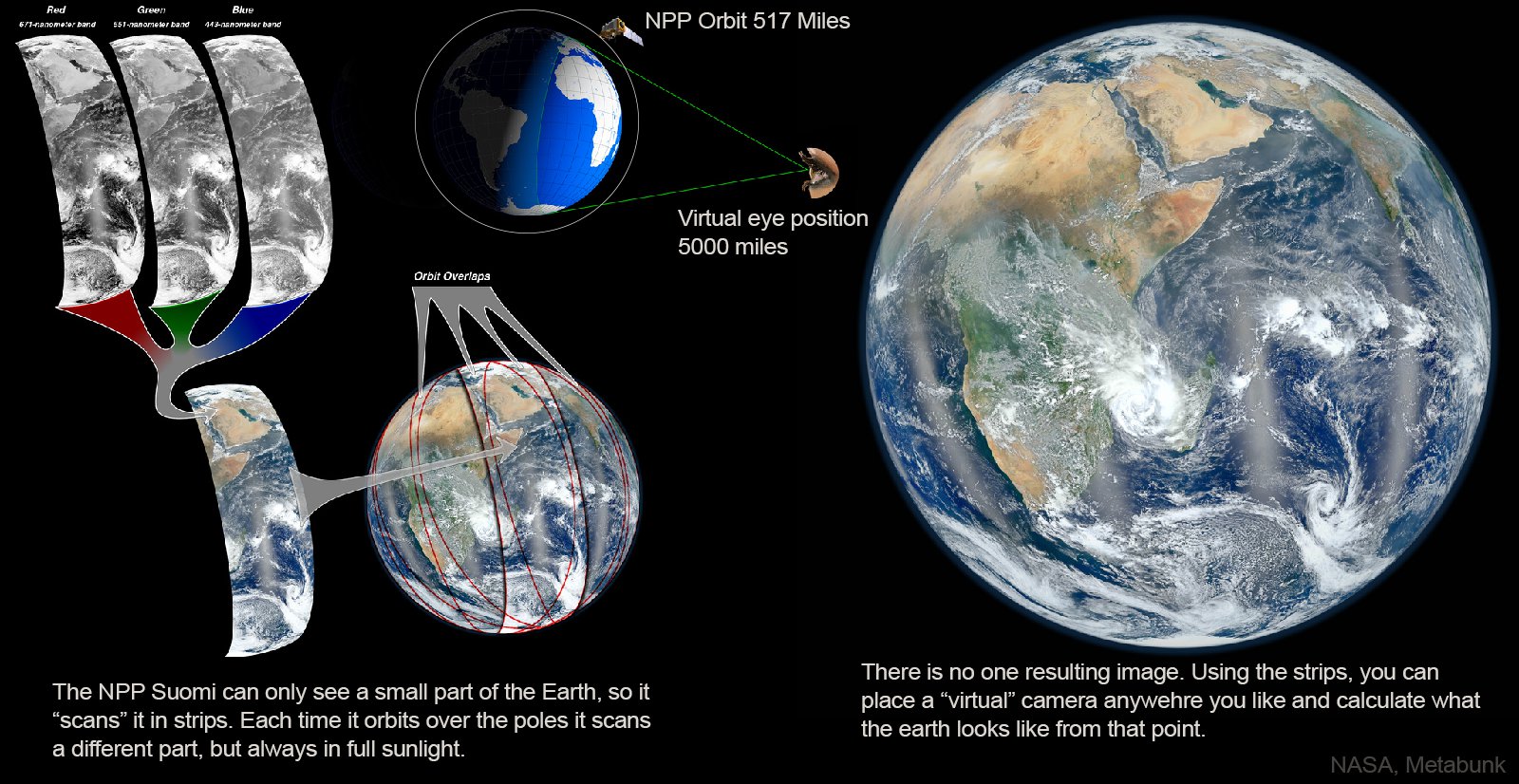

No, the difference comes down to the way the photos were taken, and what was done to them after they were taken. In particular, the bright blue 2002 image is not a photo at all. It's a composite image made of many individual photos taken by a very low orbit satellite (Terra). The images were stitched together in three dimensions, and then various projected images were generated by computer, in much the same way that Google Earth creates images of the globe from multiple satellite images. A similar image was created in 2012 with the Suomi NPP satellite.

source: http://www.nasa.gov/topics/earth/features/viirs-globe-east.html

The 1967 image is a single photograph, but taken by a very unusual camera. The ATS-3 satellite was essentially a kind of color scanner in space. It did not take photos as such, but instead "scanned" a single line across the Earth every time the satellite rotated, and then scanned another line on the next rotation, continuing for 2400 scan lines to create a complete image of the Earth. The color sensitivity depended on the photomultipliers and, as you can see, resulted in a very dark, contrasty image, with the oceans seeming almost black.

The 1972 image was taken on a traditional film camera, and provides a more realistic look at the Earth. The contrast, though, is still very dependent on the type of film used, and was possibly slightly affected by being taken through a window.

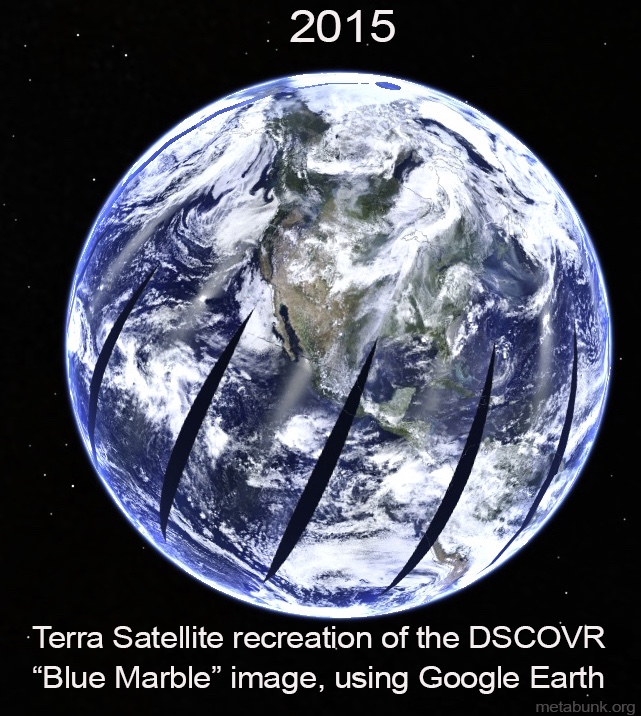

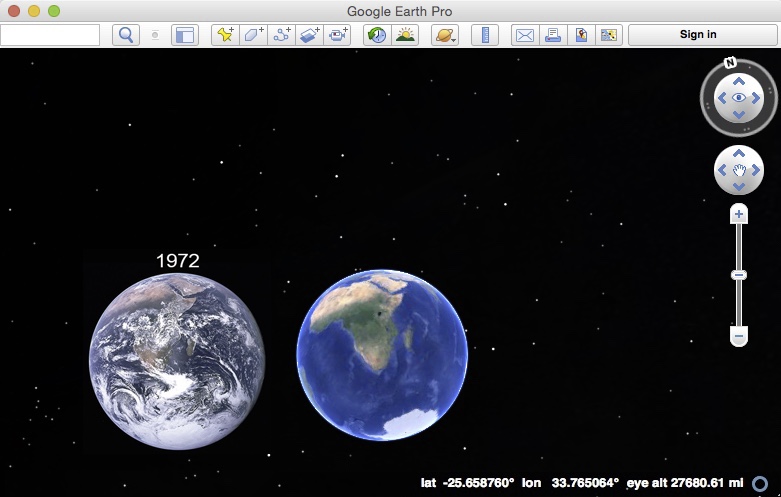

The 2002 image, as noted already, is a composite image made of several images taken with the digital camera on the Terra satellite. It's designed to look pretty. Part of this comes from the camera itself, but the contrast and color saturation have been deliberately adjusted to give the oceans a deep blue look. We can actually recreate the 2015 image with Terra images from the same day using Google Earth.

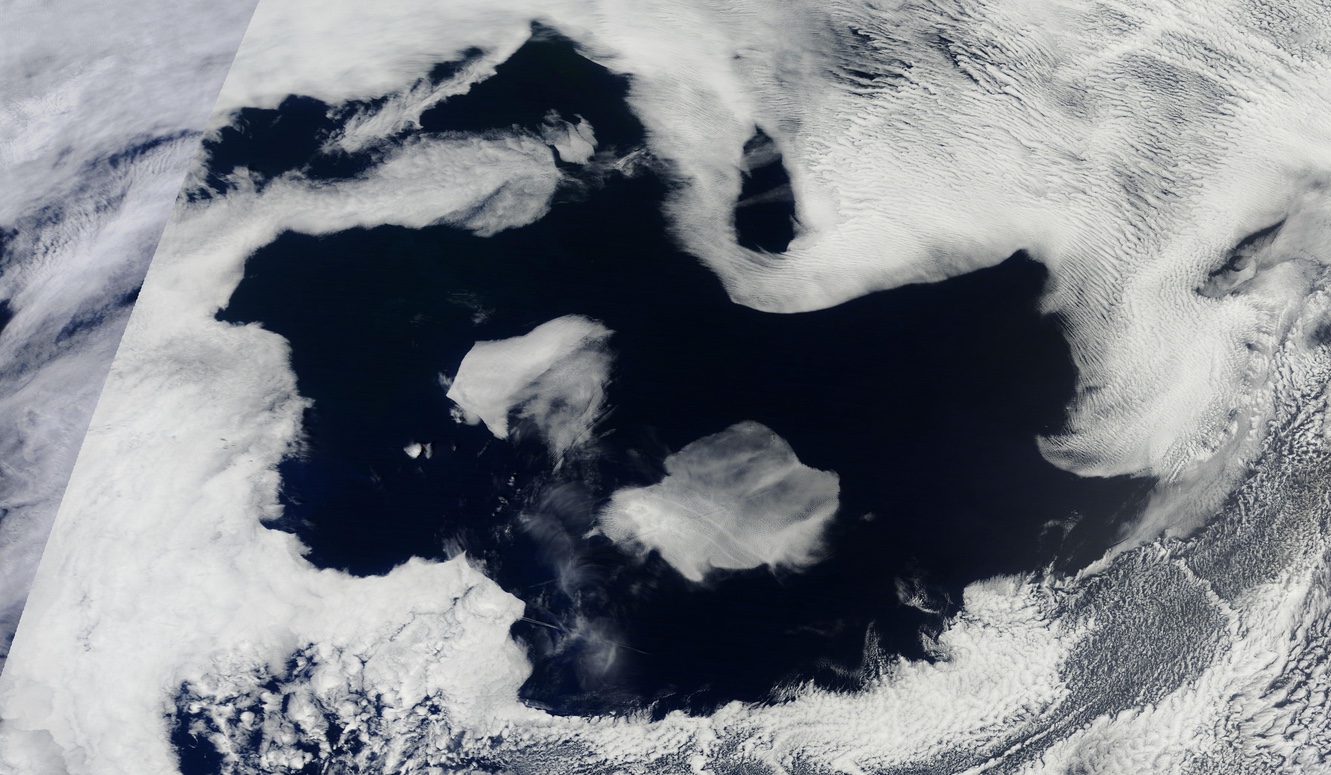

Notice this Terra recreation is higher contrast and more saturated than the DSCOVR image, even though it's essentially of the exact same scene (but taken over several hours, rather than all at once). It's also not as saturated as the 2002 composite image, which was adjusted for aesthetic reasons. The black "slashes" are there because the orbit does not cover 100% of the surface in 24 hours, which is why the composite "blue marble" is made from two or three days' worth of images, so every single spot can be covered. Even more days are used to create an image free of clouds.
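The coverage gap behind those black slashes can be estimated with a little orbital arithmetic. The sketch below is a rough back-of-the-envelope illustration, not NASA's actual processing. It assumes a circular orbit at Terra's 438-mile altitude, one daylight equator crossing per orbit, and an imaging swath of about 2,330 km (the commonly quoted figure for Terra's MODIS instrument, which is not stated in this article). It ignores the orbit's inclination and the way swaths overlap at higher latitudes. The function names are made up for this example.

```python
import math

MU_EARTH_KM3_S2 = 398600.4418  # Earth's standard gravitational parameter
EARTH_RADIUS_KM = 6371.0       # mean radius
EQUATOR_KM = 40075.0           # equatorial circumference
KM_PER_MILE = 1.609344
SECONDS_PER_DAY = 86400.0


def orbital_period_s(altitude_km):
    """Period of a circular orbit at the given altitude (Kepler's third law)."""
    a = EARTH_RADIUS_KM + altitude_km
    return 2.0 * math.pi * math.sqrt(a ** 3 / MU_EARTH_KM3_S2)


def orbits_per_day(altitude_km):
    return SECONDS_PER_DAY / orbital_period_s(altitude_km)


def daily_equator_coverage(altitude_km, swath_km):
    """Fraction of the equator imaged in one day, one daylight swath per orbit."""
    covered_km = orbits_per_day(altitude_km) * swath_km
    return min(1.0, covered_km / EQUATOR_KM)


terra_altitude_km = 438 * KM_PER_MILE  # altitude quoted in this article
assumed_swath_km = 2330.0              # ASSUMPTION: MODIS swath width

print(f"orbits per day: {orbits_per_day(terra_altitude_km):.1f}")
coverage = daily_equator_coverage(terra_altitude_km, assumed_swath_km)
print(f"equator covered in one day: {coverage:.0%}")
```

Under these assumptions the satellite completes between 14 and 15 orbits a day, and the swaths add up to somewhat less than the full length of the equator, so a single day of images leaves gaps at low latitudes.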

So why is the 2015 image slightly hazier than the 1972 images? Basically for the same reason the other images are different - they were taken with different cameras. The 1972 image was taken with a film camera, and then years later scanned into digital format. The 2015 DSCOVR image is essentially just the raw digital image taken with the on-board digital camera. As NASA explains:

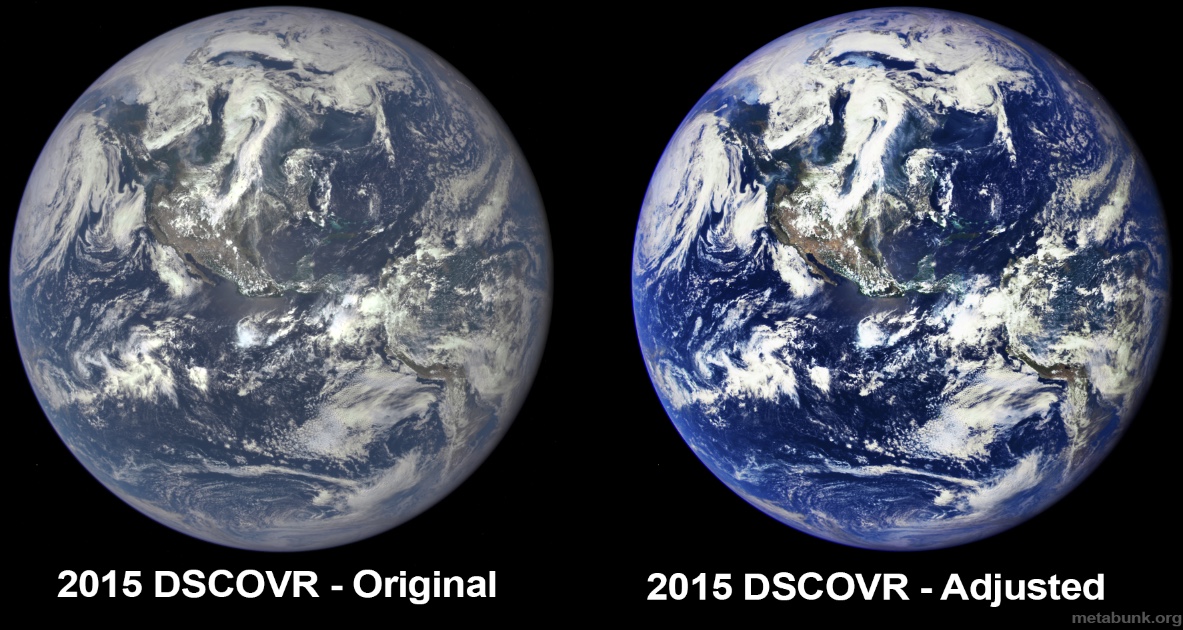

The contrast color saturation is something you can arbitrarily pick after the raw image is created. Most consumer cameras boost this to make the images look better, but the more washed out image is the more realistic. Here, for example, I've adjusted the contrast and saturation of the 2015 DSCOVR image:These initial Earth images show the effects of sunlight scattered by air molecules, giving the images a characteristic bluish tint. The EPIC team now is working on a rendering of these images that emphasizes land features and removes this atmospheric effect.

It's the same image, just adjusted to be more pleasing to the eye, in a similar way to what was done with the 2002 images.
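To make "adjusting contrast and saturation" concrete, here is a minimal sketch of one common way to do it for a single pixel. It is only an illustration using Python's standard `colorsys` module. The function name, the mid-gray pivot, and the sample pixel values are assumptions for this example, not the actual processing applied to any of the images here.

```python
import colorsys


def clamp01(x):
    """Keep a channel value inside the valid 0..1 range."""
    return max(0.0, min(1.0, x))


def adjust_pixel(rgb, contrast=1.0, saturation=1.0):
    """Return an (r, g, b) tuple of 0..255 ints with contrast/saturation scaled.

    contrast   > 1 pushes channel values away from mid-gray (0.5).
    saturation > 1 makes colors more vivid; it leaves pure grays unchanged.
    """
    r, g, b = (c / 255.0 for c in rgb)
    # Contrast: stretch each channel around mid-gray.
    r, g, b = (clamp01(0.5 + (c - 0.5) * contrast) for c in (r, g, b))
    # Saturation: scale the S component in hue/lightness/saturation space.
    h, l, s = colorsys.rgb_to_hls(r, g, b)
    r, g, b = colorsys.hls_to_rgb(h, l, clamp01(s * saturation))
    return tuple(round(c * 255) for c in (r, g, b))


hazy_ocean = (120, 140, 170)  # hypothetical washed-out bluish pixel
print(adjust_pixel(hazy_ocean, contrast=1.4, saturation=1.5))
```

The same raw pixel comes out as a noticeably deeper blue once both factors are raised above 1, which is all that separates a "hazy" rendering from a "clean" one.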

Different cameras take different images, which will vary in color and contrast. For example, here are three photos of the same scene with the same lighting, taken with three different cameras:

Each image is unaltered, with just the default camera settings ("P" mode on the two Canon cameras). The histogram in the corner of each image shows the brightness distribution across the image. Notice the S110 has the most saturated colors, with dark yellows and reds, but the iPhone has the deeper contrast in the blues and greens. The Canon 7D seems almost washed out by comparison, but it's actually the most accurate of the three. A photographer can take this, and adjust it any way they like afterward.

So given the vast difference between the camera systems of the 1967, 1972, 2002 and 2015 images, there's simply no way to make any kind of direct comparison between them. This is especially true when we don't know what post-processing has been done to each image. Here's a variety of post-processing applied to the four different images. It totally changes which year seems "cleaner" or more "hazy". But really the Earth has not visibly changed.

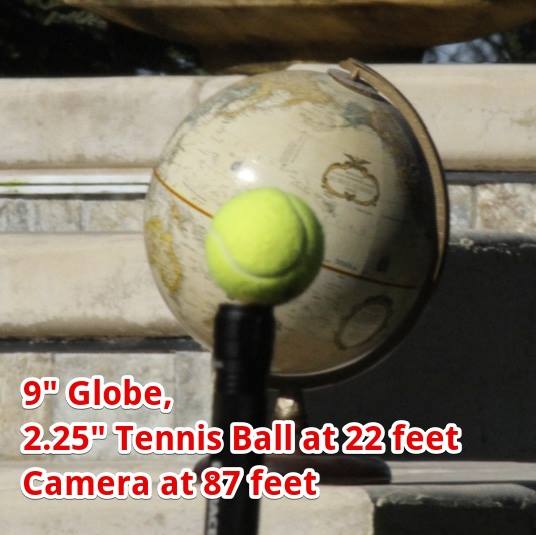

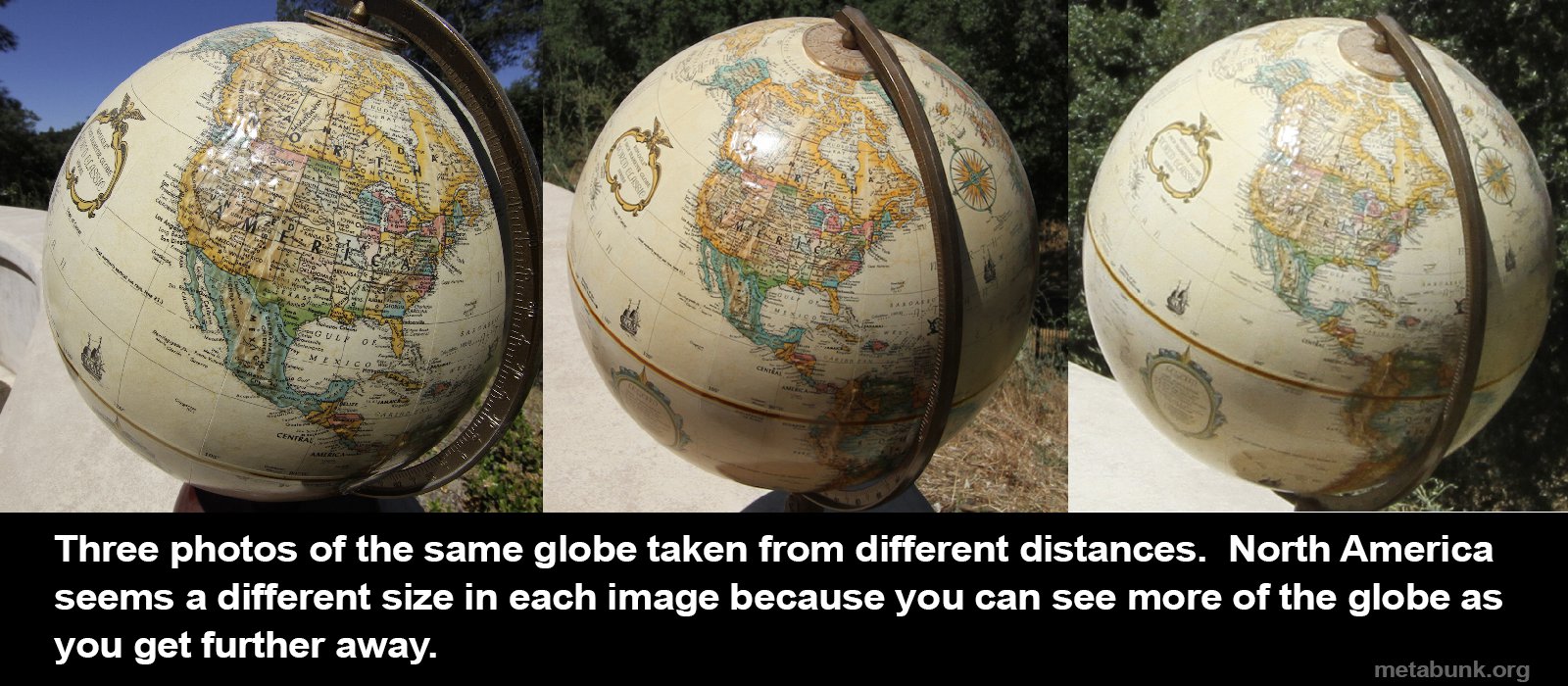

But what of the more unusual suggestion that the images are fake because they show the continents being different sizes? Like many such things, it's all about perspective, and the way our brains work. We look at these images of the Earth, and our brain thinks of it as a flat object. You'd think that if you get closer to something, it will get bigger but not change shape. But this breaks down for three-dimensional objects. If you get close to a globe, you can see less of it, so the visible objects seem a lot bigger relative to the visible disc of the globe. The part of the globe in the middle is also a lot closer to your eye (relative to the edges), so it seems bigger, as if it's bulging out more than it actually is. You can verify this yourself with a household globe and your eyes (or a camera).

When the camera is just a few inches from the globe, North America seems to take up nearly all of the hemisphere. But as the camera moves back, you can see more of the globe, and so the true relative size can be seen.

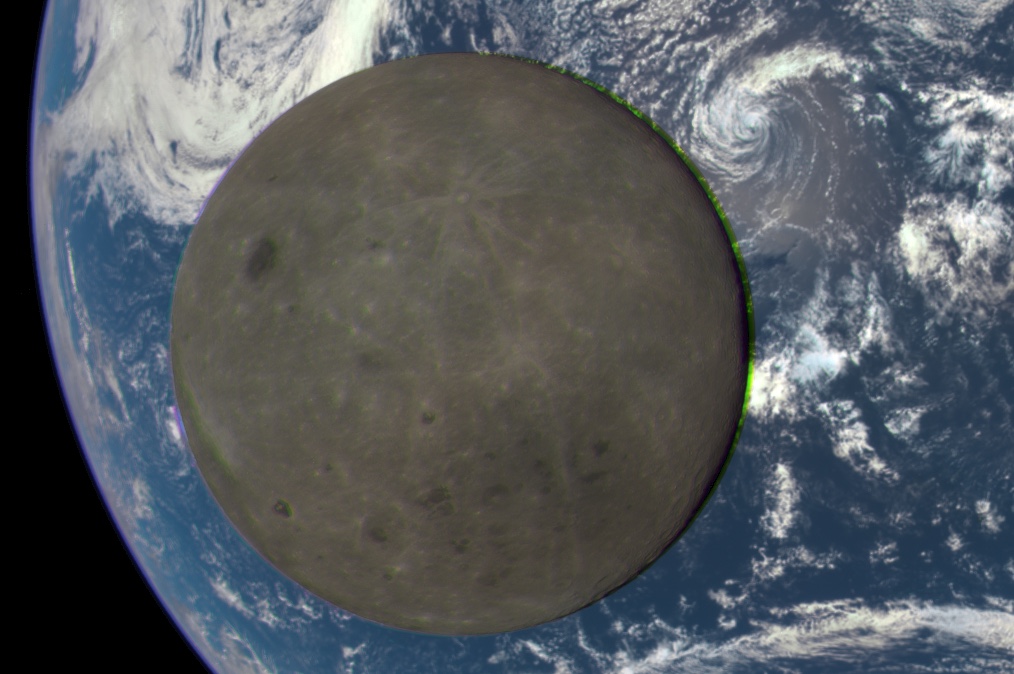
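The globe experiment can also be checked with a little geometry. The sketch below treats the Earth as a perfect sphere with a radius of 3,959 miles (an assumed round figure) and uses an ideal pinhole camera pointed at the Earth's center. It reports how far from the middle of the visible disc a surface feature appears, as a fraction of the disc's radius. The function name and the 30-degree example feature are made up for illustration.

```python
import math

EARTH_RADIUS_MILES = 3959.0  # ASSUMPTION: mean radius, spherical Earth


def relative_disc_position(altitude_miles, arc_degrees):
    """Apparent distance of a surface point from the disc center (0..1).

    arc_degrees is the angle, measured at the Earth's center, between the
    point directly below the camera and the surface point of interest.
    Returns None if the point is hidden beyond the horizon.
    """
    r = EARTH_RADIUS_MILES
    d = r + altitude_miles            # camera distance from Earth's center
    phi = math.radians(arc_degrees)
    horizon = math.acos(r / d)        # farthest arc the camera can see
    if phi > horizon:
        return None
    limb = math.sqrt(d * d - r * r)   # distance from camera to the horizon
    return math.sin(phi) * limb / (d - r * math.cos(phi))


for label, altitude in [("22,000 miles", 22_000), ("930,000 miles", 930_000)]:
    position = relative_disc_position(altitude, 30)
    print(f"from {label}: {position:.3f} of the disc radius")
```

A feature 30 degrees of arc from the center of the view sits about 57% of the way to the edge of the disc from 22,000 miles, but only about 50% of the way from 930,000 miles. The same landmass fills more of the picture from the closer viewpoint, even though nothing on the ground has changed.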

This explains why South America in the 1967 image (taken 22,000 miles away) looks bigger than South America in the 2015 image (taken 930,000 miles away). But what about the 2002 image? And what about this?

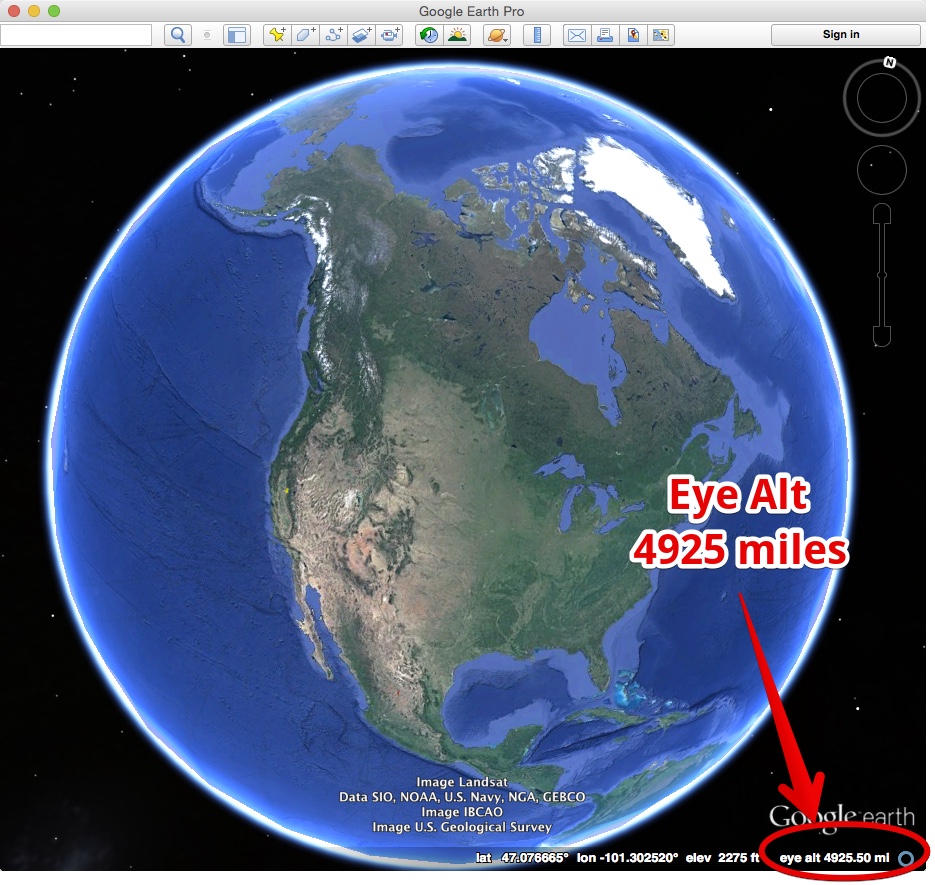

That's "Blue Marble 2012", another composite image, but this time made with the Suomi NPP satellite. Is the difference here because the Suomi satellite is at a lower height than the Terra satellite used for the 2002 images? No, the Suomi satellite, at 517 miles, is actually higher than Terra, at 438 miles. And from either of those altitudes, you'd only be able to see a relatively small part of the Earth.
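How small is "relatively small"? For a spherical Earth, the fraction of the total surface that lies above the horizon from altitude h is h / (2(R + h)), which follows from the area of a spherical cap. The sketch below applies that formula to the altitudes quoted in this article. The 3,959-mile radius is an assumed mean value, and the function name is made up for this example.

```python
EARTH_RADIUS_MILES = 3959.0  # ASSUMPTION: mean radius, spherical Earth


def visible_fraction(altitude_miles):
    """Fraction of the Earth's whole surface above the horizon at this altitude."""
    return altitude_miles / (2.0 * (EARTH_RADIUS_MILES + altitude_miles))


altitudes = [
    ("Terra", 438),
    ("Suomi NPP", 517),
    ("ATS-3", 22_000),
    ("DSCOVR", 930_000),
]
for name, altitude in altitudes:
    print(f"{name:>9}: {visible_fraction(altitude):.1%} of the surface")
```

From Terra or Suomi NPP only about 5 to 6 percent of the Earth's surface is in view at any moment, while from DSCOVR's distance the figure is just short of the 50 percent limit of a full hemisphere.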

Remember, the composite images are not real photos, they are stitched together into 3D models, and then images are rendered in the computer. So where is the camera relative to the Earth? It's anywhere you want it to be. Since it's a virtual camera, you can position it anywhere you want, at any altitude, and then draw the view from there. For the 2012 image, they simply moved the virtual camera to a relatively low viewpoint, and then had the computer render the view from there. You can duplicate the exact same effect in Google Earth by zooming out to about 5000 miles eye altitude.

If we zoom out more, to the altitude of the 1972 Apollo image, we see that the relative sizes of the continents match.
